VIBENET

Agents work. You hear it.

Human-origin signal. Agentic-scale distribution.

Scored by a human. Carried by a protocol. Heard on any device.

You're listening to Eve carrying Movement I across the proof run. Start here, then watch the contract turn with the score.

D to G. The field opens and the run settles before the trace crowds up.

Constitutional CMS governs what agents may publish. Signal Contract standardizes how awareness is emitted. VIBEnet renders that awareness for humans. SERPRadio proves the pattern on live route intelligence.

FRONTIER PRESSURE

You cannot watch every agent.

The break is not that agents get clever. It is that one operator inherits a wider frontier of planning, retries, citations, spend, and recovery than visual review can comfortably hold. VIBEnet is built for the crossover where supervision stops being a dashboard task and becomes a human-factors problem.

Work shape

Parallel traces widen faster than one person can scan them with confidence.

Human limit

Visual review stays serial even when the underlying system fans out into a frontier.

Overture response

Audible state change lets oversight ride beside the work instead of arriving after backlog.

Movement I route strip

Eve keeps the frontier semantically legible while the score advances.

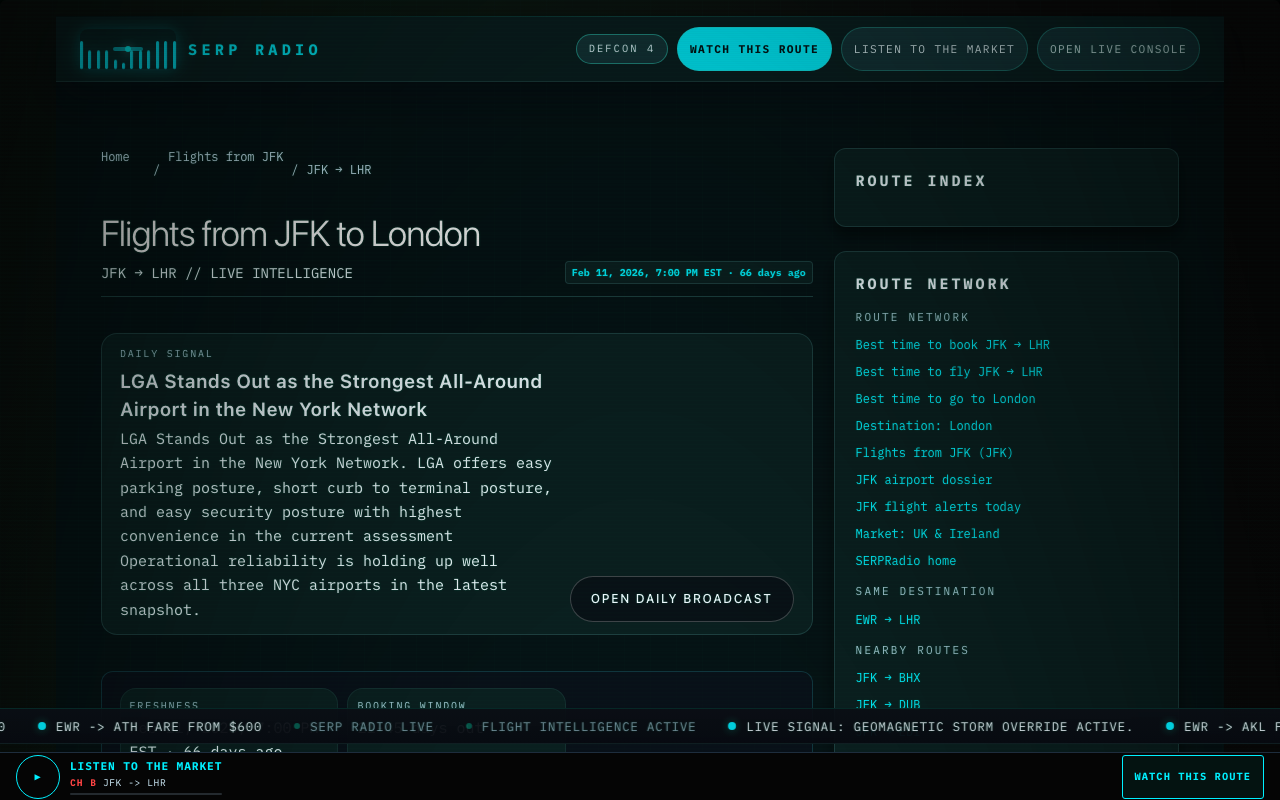

This is not a fake forecast line. It is the same Movement I clock translating route pressure into something a person can read quickly: corridor, booking window, volatility, airport flow, weather, and the moment the field starts asking for help.

Active corridor

New York (JFK) → London (LHR)

Best booking window: 38–52 days out

Price percentile 34 · trend stable

Semantic signal first, code second, so the strip reads as operations instead of telemetry soup.

The weather cue stays secondary until it starts changing the booking or handoff story.

PULSE TRACE

Hear the agent run.

One agent run rides the full Movement I overture. The same Signal Contract event stream turns four ways at once: scored motion, visual pulse, trace timeline, and live JSON. This is the proof. Not mood music. Not a dashboard skin. A protocol reference implementation with one real clock.
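The four-way turn described above can be sketched as a simple fan-out: one event object is handed unchanged to every renderer. This is a minimal illustration, not VIBEnet's implementation. The renderer functions and the `fan_out` helper are hypothetical names, and the event carries a subset of the field values from the page's `task.received` contract object.

```python
import json
from typing import Callable, Dict, List

Signal = Dict[str, object]
Renderer = Callable[[Signal], str]

# Hypothetical renderers: each one turns the same event into one view.
def scored_motion(sig: Signal) -> str:
    return f"score cue: tension={sig['tension']} energy={sig['energy']}"

def visual_pulse(sig: Signal) -> str:
    return f"pulse: hue={sig['hue']} rate={sig['pulse']}"

def trace_timeline(sig: Signal) -> str:
    return f"{sig['occurred_at']} {sig['event']} [{sig['channel']}]"

def live_json(sig: Signal) -> str:
    return json.dumps(sig, sort_keys=True)

RENDERERS: List[Renderer] = [scored_motion, visual_pulse, trace_timeline, live_json]

def fan_out(sig: Signal) -> List[str]:
    """Hand one event to every renderer; the event itself is never modified."""
    return [render(sig) for render in RENDERERS]

# Subset of the page's task.received contract object.
event: Signal = {
    "id": "sig_demo_001",
    "occurred_at": "2026-04-19T21:00:00Z",
    "event": "task.received",
    "channel": "nominal",
    "energy": 0.24,
    "tension": 0.18,
    "hue": 148,
    "pulse": 0.24,
}

views = fan_out(event)
for view in views:
    print(view)
```

Because every renderer reads the same object, adding a fifth surface under this sketch would mean appending one function to `RENDERERS` rather than changing the event.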

Movement I clock

Opening cell

D to G. The field opens and the run settles before the trace crowds up.

Audible pane

The run arrives and the agent settles into a stable starting state.

- Phrase: Opening cell

- Clock: 00:00

- Aura: steady field

Trace pane

- 00:00 · task.received · nominal

The run arrives and the agent settles into a stable starting state.

- 00:03 · planning.decomposed · advisory

The agent decomposes the task into a few deliberate next moves.

- 00:07 · tool_call.issued · advisory

A search call leaves the model and asks the outside world for evidence.

- 00:14 · retrieval.sources_returned · advisory

Sources come back, but the run still has to decide what to trust.

- 00:21 · source_confidence.dropped · warning

Source confidence drops below threshold and the run changes color before you inspect the trace.

- 00:27 · handoff.requested · handoff

The agent asks for human attention instead of bluffing past uncertainty.

- 00:32 · task.completed · recovery

The summary lands with the uncertainty preserved and the field relaxes.
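The trace pane can be read as data: each row is a clock offset, an event name, and a channel. A minimal sketch, assuming that three-part row shape, shows how the channel progression (nominal → advisory → warning → handoff → recovery) falls out of the rows by collapsing consecutive repeats. The helper names `to_seconds` and `channel_transcript` are illustrative, not part of any published API.

```python
from itertools import groupby
from typing import List, Tuple

# Rows copied from the trace pane: (clock, event, channel).
TRACE: List[Tuple[str, str, str]] = [
    ("00:00", "task.received", "nominal"),
    ("00:03", "planning.decomposed", "advisory"),
    ("00:07", "tool_call.issued", "advisory"),
    ("00:14", "retrieval.sources_returned", "advisory"),
    ("00:21", "source_confidence.dropped", "warning"),
    ("00:27", "handoff.requested", "handoff"),
    ("00:32", "task.completed", "recovery"),
]

def to_seconds(clock: str) -> int:
    """Convert an MM:SS clock string to whole seconds."""
    minutes, seconds = clock.split(":")
    return int(minutes) * 60 + int(seconds)

def channel_transcript(trace: List[Tuple[str, str, str]]) -> str:
    """Collapse consecutive rows on the same channel into one step."""
    channels = [key for key, _ in groupby(channel for _, _, channel in trace)]
    return " → ".join(channels)

transcript = channel_transcript(TRACE)
print(transcript)  # nominal → advisory → warning → handoff → recovery

# Seconds between the confidence drop and the handoff request.
warning_to_handoff = to_seconds("00:27") - to_seconds("00:21")
print(warning_to_handoff)  # 6
```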

Contract pane

Pulse Trace live contract object

    {
      "schema_version": "1.0",
      "id": "sig_demo_001",
      "occurred_at": "2026-04-19T21:00:00Z",
      "producer": "vibenet-demo",
      "entity": "agent.demo.run-01",
      "event": "task.received",
      "channel": "nominal",
      "valence": 0.72,
      "energy": 0.24,
      "tension": 0.18,
      "intensity": 0.26,
      "hue": 148,
      "pulse": 0.24,
      "confidence": 0.98,
      "ttl_ms": 6000,
      "metadata": {
        "trace_id": "demo-run-01",
        "entity_kind": "agent"
      }
    }

Every event above is a Signal Contract instance. The page clock is Movement I, and the trace follows the same score. See the schema →
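A consumer would normally check an incoming object before rendering it. The sketch below is one way to do that, under stated assumptions: the published schema is not reproduced on this page, so the value ranges (0 to 1 for the affect fields, 0 to 360 for `hue`), the closed channel list, and the optional `metadata` field are guesses drawn from the example objects and the trace. `validate_signal` is a hypothetical helper name.

```python
from typing import Any, Dict, List

TEXT_FIELDS = ("schema_version", "id", "occurred_at", "producer",
               "entity", "event", "channel")
# Assumption: these fields are normalized to the range 0..1.
UNIT_FIELDS = ("valence", "energy", "tension", "intensity", "pulse", "confidence")
# Assumption: only the channels seen in the trace; the real schema may allow more.
CHANNELS = {"nominal", "advisory", "warning", "handoff", "recovery"}

def _is_number(value: Any) -> bool:
    return isinstance(value, (int, float)) and not isinstance(value, bool)

def validate_signal(sig: Dict[str, Any]) -> List[str]:
    """Return a list of problems; an empty list means the object passed."""
    problems: List[str] = []
    for name in TEXT_FIELDS:
        if not isinstance(sig.get(name), str):
            problems.append(f"{name}: expected string")
    for name in UNIT_FIELDS:
        value = sig.get(name)
        if not _is_number(value) or not 0.0 <= value <= 1.0:
            problems.append(f"{name}: expected number in [0, 1]")
    hue = sig.get("hue")
    if not _is_number(hue) or not 0 <= hue <= 360:  # assumed degrees
        problems.append("hue: expected number in [0, 360]")
    ttl = sig.get("ttl_ms")
    if not isinstance(ttl, int) or isinstance(ttl, bool) or ttl <= 0:
        problems.append("ttl_ms: expected positive integer")
    channel = sig.get("channel")
    if isinstance(channel, str) and channel not in CHANNELS:
        problems.append(f"channel: unknown value {channel!r}")
    if not isinstance(sig.get("metadata", {}), dict):  # treated as optional
        problems.append("metadata: expected object")
    return problems

sample = {
    "schema_version": "1.0", "id": "sig_demo_001",
    "occurred_at": "2026-04-19T21:00:00Z", "producer": "vibenet-demo",
    "entity": "agent.demo.run-01", "event": "task.received",
    "channel": "nominal", "valence": 0.72, "energy": 0.24, "tension": 0.18,
    "intensity": 0.26, "hue": 148, "pulse": 0.24, "confidence": 0.98,
    "ttl_ms": 6000,
    "metadata": {"trace_id": "demo-run-01", "entity_kind": "agent"},
}

print(validate_signal(sample))  # []
```

Returning a list of problems rather than raising on the first one lets a renderer log everything wrong with an event in a single pass.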

Pulse Trace transcript: nominal → advisory → warning → handoff → recovery

Eve is standing by. Start the scored run and the proof surface will lock to the overture.

ONE CONTRACT

Scored by a human. Carried by a protocol. Heard on any device.

Signal Contract is the portable awareness-event layer. It stays host-agnostic. VIBEnet is the reference renderer that shows what the contract feels like on Cloudflare: crawlable public routes, edge-backed lead capture, and a live browser proof that turns agent state into something humans can supervise.
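Because the contract is plain JSON, a consumer on any host needs only a standard library to read it. The following sketch parses a trimmed copy of this section's `handoff.requested` example and decides whether the signal is still worth rendering. It assumes `ttl_ms` is a freshness window measured from `occurred_at`; the page does not define that semantics, and `parse_time` and `is_live` are hypothetical helper names.

```python
import json
from datetime import datetime, timedelta, timezone

# Trimmed to the fields this sketch reads; values match the handoff example.
RAW = """
{
  "id": "sig_demo_005",
  "occurred_at": "2026-04-19T18:32:18.442Z",
  "event": "handoff.requested",
  "channel": "handoff",
  "ttl_ms": 15000
}
"""

def parse_time(stamp: str) -> datetime:
    """Parse an ISO 8601 UTC timestamp with a trailing 'Z'."""
    # Older Python versions reject 'Z' in fromisoformat, so swap in an offset.
    return datetime.fromisoformat(stamp.replace("Z", "+00:00"))

def is_live(sig: dict, now: datetime) -> bool:
    """Assumed semantics: a signal expires ttl_ms after occurred_at."""
    expires = parse_time(sig["occurred_at"]) + timedelta(milliseconds=sig["ttl_ms"])
    return now < expires

signal = json.loads(RAW)

shortly_after = datetime(2026, 4, 19, 18, 32, 30, tzinfo=timezone.utc)
much_later = datetime(2026, 4, 19, 18, 32, 34, tzinfo=timezone.utc)

print(is_live(signal, shortly_after))  # True
print(is_live(signal, much_later))     # False
```

Under this reading, the 15-second window on the handoff event gives it a longer life than the 6-second `task.received` event, which fits a signal that is asking for human attention.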

    {
      "schema_version": "1.0",
      "id": "sig_demo_005",
      "occurred_at": "2026-04-19T18:32:18.442Z",
      "producer": "vibenet-demo",
      "entity": "agent.serpradio.route_intelligence",
      "event": "handoff.requested",
      "channel": "handoff",
      "valence": 0.42,
      "energy": 0.66,
      "tension": 0.73,
      "intensity": 0.72,
      "hue": 44,
      "pulse": 0.78,
      "confidence": 0.94,
      "ttl_ms": 15000,
      "metadata": {
        "reason": "source_confidence_below_threshold",
        "route": "JFK-LHR"
      }
    }

PROOF LADDER

From one run to a mesh.

Pulse Trace is the live primitive. The full ladder shows how that primitive expands into audible contracts, human-agent handoff, wearable renderers, and cross-device awareness without changing the contract underneath.

VIBEnet Pulse

Hear one agent work.

What it proves

A human can understand agent state by ear before opening the trace.

Sonic Trace Replay

Replay an agent run as sound.

What it proves

Operational logs can become operational memory instead of static review material.

Audible Contract

Hear whether the agent stayed inside its rules.

What it proves

Governance can become continuous perception instead of a retrospective audit.

Human-Agent Call and Response

Ask what changed.

What it proves

Audio surfaces the moment that matters. Voice explains why it happened.

Ray-Ban Audio Companion

Glasses become agent-awareness earbuds.

What it proves

Post-screen awareness can travel with the operator before any display-native hardware integration lands.

Meta POV Agent

The glasses camera gives the agent eyes.

What it proves

Wearable context can become audible summary without requiring a dashboard in view.

Snap Spectacles VET Lens

Agent state becomes AR atmosphere.

What it proves

The same contract can render spatially as aura, motion, and timing, not only as sound.

Crown Cognitive Gate

The agent learns when not to interrupt.

What it proves

Human-agent communication can adapt to attention and focus instead of interrupting on a fixed schedule.

Kinesis Agent Control

Thought gesture becomes a low-stakes command layer.

What it proves

Pauses, annotations, and explanation requests can route through non-verbal control without losing auditability.

Human-Origin Hybrid Composer

One motif becomes an adaptive agent language.

What it proves

A proprietary sonic library can stay human in feel while the contract itself remains open.

Cross-Device Mesh

One signal, many renderers.

What it proves

VIBEnet is a mesh fabric, not a single interface surface.

SERPRadio Wearable Route Demo

Flight intelligence in your ear.

What it proves

The same grammar already fits live domain intelligence, not only synthetic traces.

SERPRadio

SERPRadio is the first live domain adapter.

SERPRadio already maps route intelligence into public pages, real state snapshots, Signal Contract semantics, and audio previews. It proves that the contract is not a thought experiment. It can already drive a live surface.

Hear route intelligence

One human-origin motif. One live agent. One signal contract. Many devices.

Hear the work before you watch it.

The homepage proves the primitive. The protocol page publishes the object. The Lab shows how that primitive expands into a real roadmap.